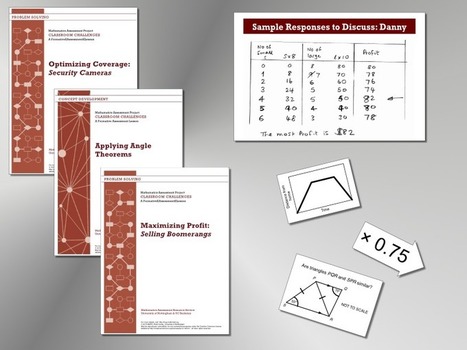

Classroom Challenges are lessons that support teachers in formative assessment. There are 100 lessons in total, 20 at each grade from 6 to 8 and 40 for ‘Career and College Readiness’ at High School Grades 9 and above. Some lessons are focused on developing math concepts, others on solving non-routine problems.


This special report delves into the convergence of education and artificial intelligence, offering a comprehensive guide for educators navigating this transformative landscape. Via EDTECH@UTRGV

While new and perhaps useful, ChatGPT lacks the substance educators should be encouraging in their students' writing. Via EDTECH@UTRGV

This post is loaded with simple ways to integrate AI in the classroom. It's easier than you think, and it doesn't require expensive equipment or software. AI, or artificial intelligence, is ready for its closeup! Are you and your students ready to become "AI experimenters"?

Via Yashy Tohsaku

Decktopus is an AI presentation maker that creates presentations in seconds. You only need to type the presentation title and your presentation is ready. Via Nik Peachey

Nik Peachey's curator insight,

July 17, 2023 6:21 AM

Answer a sequence of questions, and then this AI tool will produce a presentation deck for you.

"The phrase “artificial intelligence” was coined by pointy-heads at MIT in 1955. Back then, it referred to an obscure field of computer science devoted to then-hypothetical programs that could engage in tasks that “require high-level mental processes such as: perceptual learning, memory organization, and critical reasoning.”

Fast-forward to 2023: While AI has been a murmur in tech circles for the last few years, those conversations didn't really get loud until the commercial release of products like ChatGPT and DALL-E. Now everyone is talking about AI everywhere you go: hyping it, demonizing it, fearing it, but most of all, misunderstanding it.

This is partly because it’s a complex subject (we don’t even agree on what “intelligence” is, let alone “artificial intelligence”), but another reason so many are getting AI wrong essentially comes down to that familiar villain, capitalism. With the explosion in popular interest, advertisers and marketers are using terms like “AI,” “AI-powered,” and “artificial intelligence” as a selling point so often that the terms are beginning to lose what little meaning they once had. When you read that something is “AI-powered,” don’t believe the hype." Via Alfredo Calderón

From slate

A decade after its widespread adoption, it’s safe to say that U.S. schools utterly failed to acclimate our students to social media and to anticipate the profound damage it could do. The result of widespread social media use among students (said the U.S. surgeon general in a recent advisory) is increased anxiety, stress, and depression. Parents are lost, too. As a school technology specialist working with a population of middle- and high-schoolers, I see firsthand parents’ desperation when I host standing-room-only sessions about social media and mental health.

Explore how using AI chatbots can transform your school year! Dive into some of the tips in my new quick reference guide for educators.

Via Yashy Tohsaku

From www2

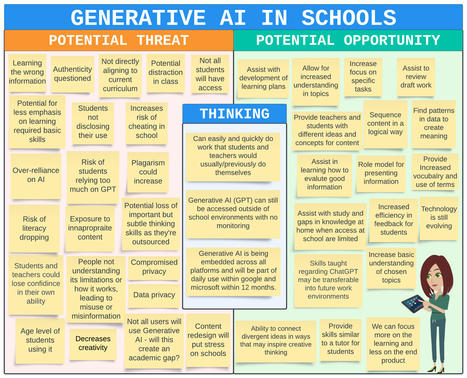

Today, many priorities for improvements to teaching and learning are unmet. Educators seek technology-enhanced approaches addressing these priorities that would be safe, effective, and

Educators are also aware of new risks. Useful, powerful functionality can also be accompanied with new data privacy and security risks. Educators recognize that AI can automatically produce output that is inappropriate or wrong. They are wary that the associations or automations created by AI may amplify unwanted biases. They have noted new ways in which students may represent others’ work as their own. They are well-aware of “teachable moments” and pedagogical strategies that a human teacher can address but are undetected or misunderstood by

Educators’ concerns are manifold. Everyone in education has a responsibility to harness the good to serve educational priorities while also protecting against the dangers that may arise as a Via Edumorfosis, Jim Lerman

Artificial Intelligence (AI) has taken the world by storm, with new AI-powered tools such as ChatGPT opening up new opportunities in higher education for content creation, communication, and learning, while also raising new concerns about the misuses and overreach of technology. Our shared humanity has also become a key focal point within higher education, as faculty and leaders continue to wrestle with understanding and meeting the diverse needs of students and to find ways of cultivating institutional communities that support student well-being and belonging.

For this year’s teaching and learning Horizon Report, then, our panelists’ discussions oscillated between these seemingly polar ideas: the supplanting of human activity with powerful new technological capabilities, and the need for more humanity at the center of everything we do. This report summarizes the results of those discussions and serves as one vantage point on where our future may be headed. Via Edumorfosis


The report by Dr. Vivek Murthy cited a “profound risk of harm” to adolescent mental health and urged families to set limits and governments to set tougher standards for use.

Generative art (art produced by AI) means you no longer need to be an accomplished artist to produce fantastic images in whatever style you choose. To create AI art, you would typically create an account with a third-party company and have generated images served to you over the web.

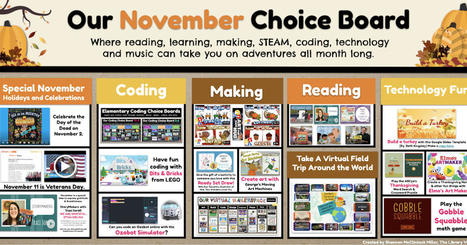

It's here, friends! Our November Choice Board where reading, learning, making, coding, technology and music can take you on adventures all month long.

Recent developments in artificial intelligence are changing how the world sees computing and challenging computing educators to rethink their approach to teaching. In this issue of Hello World, we tackle the big questions about AI and computing education, such as what AI literacy is and how we teach it. Our writers explore a range of topics including gender bias in AI and what we can do about it; how to speak to young children about AI; and why anthropomorphism hinders learners' understanding of AI. Our feature articles also include a research digest on AI ethics for children, and of course practical examples of how you can incorporate AI lessons in your classroom.

AI is a topic we’ve addressed before in Hello World, and we'll keep covering this rapidly evolving field in future.

Hello World issue 22 is a comprehensive snapshot of the current landscape of AI education.

Franck's curator insight,

November 4, 2023 11:49 AM

The development of AI should value and respect children's own thoughts, wishes, emotions, interests, self-esteem, etc., and should avoid harm to their personal dignity.

Through these efforts, educators can embrace the opportunities provided by technology and create dynamic and effective learning environments for all students. Via EDTECH@UTRGV

Our devices can be anything we want them to be. If we want them to be beguiling, and dangerous, they will end up as bogeymen. But we deserve better, as do our children. The solution to mental illness and a fraying social fabric will not be impractical, hobbled devices, or “unplugged” vacations that only the rich can afford. It will begin with a new, rational, national discussion of the way we live now, and the way we want to live, devices and all. Via Nik Peachey

Nik Peachey's curator insight,

October 7, 2023 2:18 AM

Debunking some of the myths around screen time.


From www

Artificial intelligence is arguably the most important technological development of our time – here are some terms you should know as the world wrestles with this new technology. Via Yashy Tohsaku

Understanding how to best support students can be confusing and complex. To deal with this complexity, we develop naive theories and unscientific beliefs about what helps students learn. The science of learning has tested many of these beliefs, and evidence now shows that some of our most common beliefs about learners are wrong. These misconceptions about learning may cause us to waste some of our instructional time and effort—or, even worse, impair students’ learning. Via Edumorfosis


In this podcast episode, I interview Angela Daniel who works at the MIT Step Lab. In this in-depth interview, Angela discusses AI, deeper learning, and the need for students to think about the nature of

Via Yashy Tohsaku

From phys

We believe teachers can use ChatGPT to increase their students' motivation for learning and actually prevent cheating. Here are three strategies for doing that.

Asking students not to use ChatGPT for their work is counterproductive in today’s landscape. As teachers, we should be educating them on HOW, WHEN and WHY to use it. Via Edumorfosis

EdTech: Educators & Learners's curator insight,

June 4, 2023 8:50 PM

Like everything, we must compare the 'threats' and 'opportunities' for everything regarding EdTech.

Indomed Educare's comment,

July 28, 2023 4:10 AM

Before encountering ChatGPT or any other AI, I used to ponder and write content. However, now I find myself not contemplating the writing process. If this continues, people may lose their ability to think critically, which could lead to dangerous consequences. https://www.indomededucare.com/

Well, here we are in May! Many of you have already finished out the school year, and several of you are in the final stretch. Whichever category you fall into, I hope you take a moment to try out these five new AI tools for educators. Whether you love AI, loathe it, or aren’t quite sure about it, give some of these tools a try to see what you think. For me, AI is becoming a trusty brainstorming buddy! Let’s dive into this month’s collection.

We asked educators and experts on all sides of the broader debates about ChatGPT to give us some strategies for AI-proofing assignments. Here’s what they told us:

![[PDF] Artificial Intelligence and the Future of Teaching and Learning | iPads, MakerEd and More in Education | Scoop.it](https://img.scoop.it/54KFvqjmeVxMnjcOzXhgIjl72eJkfbmt4t8yenImKBVvK0kTmF0xjctABnaLJIm9)

![[PDF] Horizon Report: Teaching and Learning Edition 2023 - Educause | iPads, MakerEd and More in Education | Scoop.it](https://img.scoop.it/Alu1_RA7eppSXNR_y92tuzl72eJkfbmt4t8yenImKBVvK0kTmF0xjctABnaLJIm9)